S3 Files converges write conflicts in under 2s

S3 Files converges write conflicts in under two seconds with zero split-brain states. The launch is strong evidence that hybrid object-file storage has evolved from a theoretical compromise into a production-ready architecture.

The service keeps S3 as the authoritative data store while presenting an NFS view. It deliberately separates the two layers so that filesystem clients cannot mutate objects at rates (every 10 milliseconds, in the AWS S3 team's example) that would overwhelm a standard bucket. Unlike legacy FUSE drivers such as s3fs-fuse or goofys, which often corrupted data, this implementation aggregates writes over a fixed 60-second window before committing them as single PUTs. Updates to existing files propagate in just 1.8 seconds, roughly fifteen times faster than new file creation, which reflects a deliberate split between the update and creation paths.

Readers will learn how this architecture outperforms traditional mounting strategies and why the boundary between files and objects remains a critical design constraint rather than a bug. The analysis covers the conflict resolution logic that lets simultaneous API and mount writes converge without failure, and compares the managed service with s3fs-fuse and Mountpoint to show where it replaces them for enterprise workloads. As AWS solidifies its 31 percent market share, this engineering shift marks the end of the "S3 is not a filesystem" debate.

The Role of S3 Files in Hybrid Object-File Storage Architectures

S3 Files as an Ephemeral NFS View on S3 Buckets

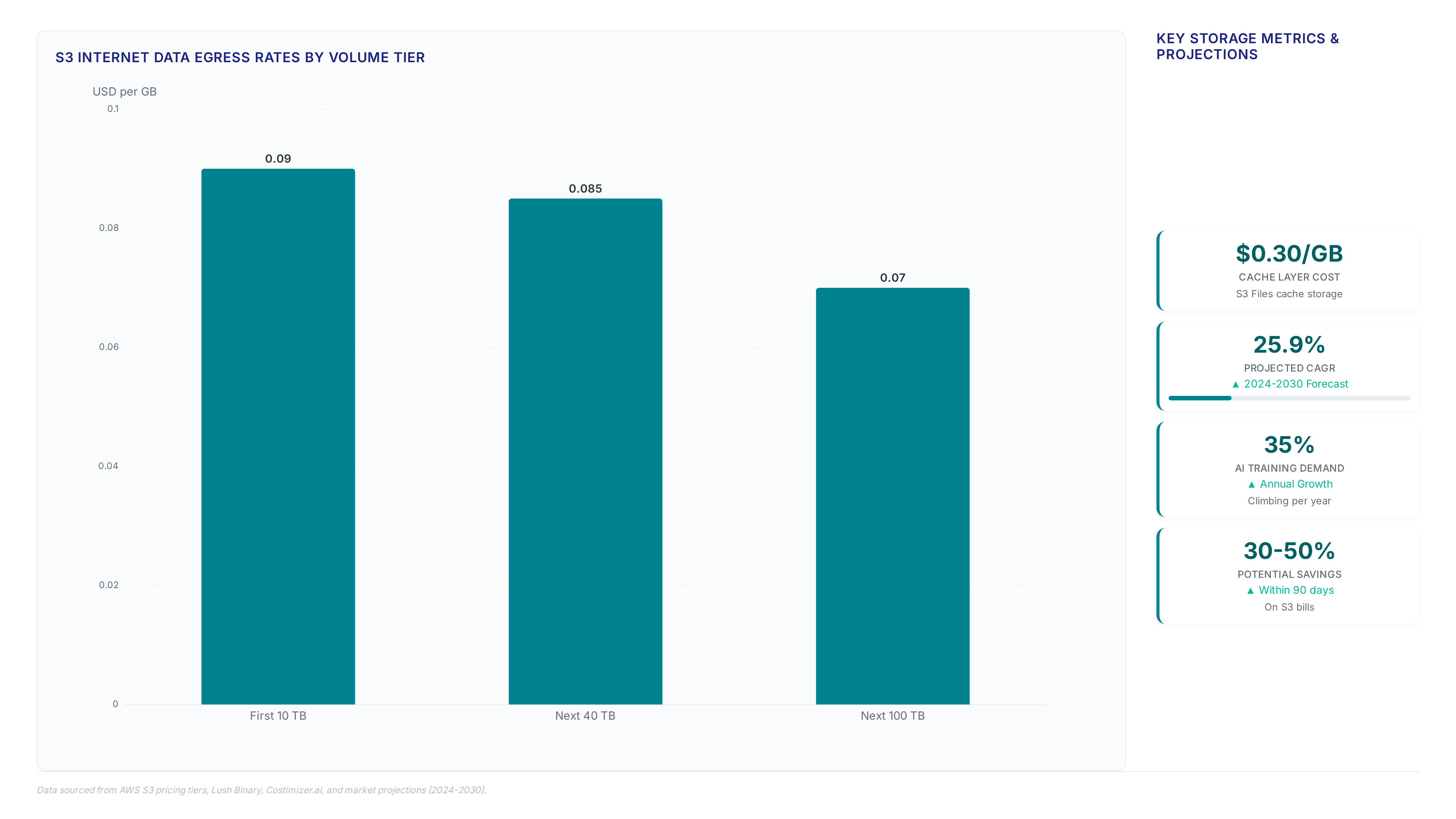

According to The Register, S3 Files reached general availability on April 7, 2026, letting operators mount buckets as NFS shares. The architecture defines an ephemeral filesystem view rather than a persistent duplicate store: per AWS S3 Team documentation, S3 remains the authoritative data source while the file layer provides transient access. An AWS Builders community analysis of the architecture layers that S3 Files eliminates and creates describes the system as EFS connected to S3, with the file side delivering real NFS semantics and the object side retaining native S3 structures. Operators pay $0.30 per GB-month for the active filesystem portion, while the remaining objects cost $0.023 per GB-month in S3 Standard.

The separation creates a specific operational constraint: changes made through one interface reach the other only after a sync window. Data written to the mount is aggregated before it is committed to the backend object store. This design avoids the corruption risks seen in legacy FUSE drivers but introduces a synchronization delay; for example, deleted objects may remain readable on the mount for seconds after removal from the bucket. Network architects should treat the NFS interface as a high-speed cache with eventual consistency guarantees, because applications that rely on immediate visibility across all mounts will fail during rapid update cycles. And because the underlying bucket is the authoritative store, direct API writes bypass filesystem locking entirely.
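To make the split pricing concrete, the sketch below estimates a monthly storage bill from the two rates quoted above. It is a minimal illustration, assuming (as the paragraph describes) that only the active working set is billed at the filesystem rate and everything else at S3 Standard. The function name is hypothetical, and the `include_s3_for_active` switch covers the alternative case where the cached portion is also billed as ordinary S3 storage.

```python
# Hypothetical helper: rough monthly storage estimate for a bucket mounted via S3 Files.
# Assumption: the active working set is billed at the filesystem rate and the rest
# of the bucket at S3 Standard. Set include_s3_for_active=True if the cached
# portion is also billed as ordinary S3 storage.

FS_RATE_PER_GB_MONTH = 0.30   # active filesystem portion (rate quoted above)
S3_RATE_PER_GB_MONTH = 0.023  # S3 Standard (rate quoted above)


def monthly_storage_cost(total_gb: float, active_gb: float,
                         include_s3_for_active: bool = False) -> float:
    """Return the estimated monthly storage bill in USD."""
    if not 0 <= active_gb <= total_gb:
        raise ValueError("active_gb must be between 0 and total_gb")
    s3_billed_gb = total_gb if include_s3_for_active else total_gb - active_gb
    return active_gb * FS_RATE_PER_GB_MONTH + s3_billed_gb * S3_RATE_PER_GB_MONTH


if __name__ == "__main__":
    # 10 TB bucket with a 500 GB active working set
    print(round(monthly_storage_cost(10_000, 500), 2))        # 368.5
    print(round(monthly_storage_cost(10_000, 500, True), 2))  # 380.0
```

Under these assumptions, a 500 GB working set contributes $150 of a roughly $368 monthly bill, so the size of the active working set, not the size of the bucket, drives the premium.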

Deploying S3 Files with VPC DNS and TCP Port 2049

According to AWS documentation, deployment requires a VPC with DNS resolution enabled and a security group allowing inbound TCP port 2049. This configuration establishes the mandatory network boundary for mounting the storage target. The setup also requires two IAM roles: a file system access role and a compute resource role. These permissions govern the translation layer between object keys and file paths. When a file system is created, AWS provisions a caching layer built on EFS infrastructure that stores the active working set, according to an S3 Files setup, performance, and pricing guide. Operators face a visibility gap during the initial synchronization of legacy buckets: objects with non-compliant key names vanish from the mount point without generating client-side errors, and the only signal appears in the `ImportFailures` CloudWatch metric. This silent failure mode complicates migration planning for uncurated datasets.
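The port requirement comes down to a single ingress rule. The sketch below only assembles the keyword arguments for boto3's EC2 `authorize_security_group_ingress` call, so it runs without AWS credentials. The security group IDs are placeholders, and referencing the client's security group instead of a CIDR block is a design choice, not an AWS requirement. DNS resolution and the two IAM roles still have to be configured separately.

```python
# Sketch: request body for boto3's ec2.authorize_security_group_ingress that
# opens TCP 2049 (NFS) on the mount target's security group for the compute tier.
# Group IDs are placeholders.

def nfs_ingress_request(mount_target_sg: str, client_sg: str) -> dict:
    """Return kwargs for ec2.authorize_security_group_ingress(**kwargs)."""
    return {
        "GroupId": mount_target_sg,
        "IpPermissions": [
            {
                "IpProtocol": "tcp",
                "FromPort": 2049,
                "ToPort": 2049,
                # Reference the client security group rather than a CIDR range,
                # so only instances in that group can reach the mount target.
                "UserIdGroupPairs": [
                    {"GroupId": client_sg, "Description": "NFS from compute"}
                ],
            }
        ],
    }


if __name__ == "__main__":
    request = nfs_ingress_request("sg-0mounttarget", "sg-0compute")
    print(request["IpPermissions"][0]["FromPort"])  # 2049
    # With credentials configured, the call would be:
    #   import boto3
    #   boto3.client("ec2").authorize_security_group_ingress(**request)
```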

The architectural tension lies between NFS semantics and object key constraints: flat namespaces whose keys contain special characters map poorly to POSIX-compliant paths. Engineers must audit source buckets before mounting to prevent data invisibility. Network access needs the same care: a security group without an explicit inbound rule for TCP 2049 blocks NFS traffic, so create that rule rather than relying on defaults. The cost is the operational overhead of validating key naming conventions upstream.

Sync Latency Risks in 10-Millisecond Filesystem Mutations

As reported by the AWS S3 Team, mutating objects every 10 milliseconds would break standard S3 buckets, which forces a visible separation between the file and object layers. This boundary creates an eventual consistency window in which read-after-write guarantees do not apply instantly across interfaces. According to AWS performance data, update propagation takes 1.8 seconds, while delete operations show bimodal delays ranging from 6 to 18 seconds. On the write path, frequent NFS writes are aggregated into fixed 60-second commit windows before PUT requests reach the backend. Operators porting legacy applications that expect sub-millisecond coherence will encounter stale reads during these propagation intervals. Maintaining this separation, however, prevents the write-amplification storms that would otherwise overwhelm standard object storage backends. The cost is a measurable visibility gap: files deleted via the API remain readable on the mount for up to 18 seconds. This design prioritizes throughput stability over strict POSIX compliance, which distinguishes the service from traditional NAS solutions. Teams should treat the filesystem as a high-throughput cache rather than a primary transactional store; porting stateful database workloads without adjusting timeout configurations invites data integrity incidents.

| Feature | S3 Files Behavior | Traditional NAS Expectation |
|---|---|---|
| Write Commit | 60-second aggregation | Immediate flush |
| Delete Visibility | 6–18 second delay | Instant removal |
| Consistency Model | Eventual | Strong |

Mission and Vision recommends auditing application retry logic to tolerate these specific propagation bounds before production deployment.
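In practice, auditing retry logic mostly means replacing single read-after-write checks with bounded polling. The sketch below polls a caller-supplied condition with capped exponential backoff until a deadline. The 20-second default is an assumption chosen to cover the 18-second upper bound on delete visibility with a small margin, and the `sleep` and `clock` parameters exist only so the logic can be tested without real waiting.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool],
               timeout_s: float = 20.0,        # assumption: covers the 18 s delete bound
               initial_delay_s: float = 0.25,
               max_delay_s: float = 4.0,
               sleep: Callable[[float], None] = time.sleep,
               clock: Callable[[], float] = time.monotonic) -> bool:
    """Poll `condition` with capped exponential backoff until it holds or time runs out.

    Returns True as soon as the condition is true, False once the deadline passes.
    """
    deadline = clock() + timeout_s
    delay = initial_delay_s
    while True:
        if condition():
            return True
        remaining = deadline - clock()
        if remaining <= 0:
            return False
        sleep(min(delay, remaining))      # never sleep past the deadline
        delay = min(delay * 2, max_delay_s)
```

For example, a job that deletes an object through the API and then expects it to disappear from the mount could call `wait_until(lambda: not os.path.exists(path))`, where `path` is the (hypothetical) mounted location of that object, and treat a `False` result as a propagation failure rather than failing on the first stale read.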

Inside S3 Files Sync Mechanisms and Conflict Resolution Logic

S3 Write Aggregation and the 60-Second Sync Window

Writes aggregate over a fixed 60-second window before committing as single operations. This mechanism buffers high-frequency NFS mutations that would otherwise overwhelm the object store with excessive PUT requests. The system collects filesystem changes locally, holding them in a transient queue rather than issuing immediate upstream updates. Operators gain protection against API throttling while sacrificing real-time consistency for data already modified on the mount point.

| Feature | Behavior | Consequence |
|---|---|---|
| Write Batching | Aggregates mutations | Reduces API call volume |
| Commit Trigger | Fixed time interval | Introduces latency ceiling |
| Data State | Local cache dominant | Remote view lags behind |

The architectural cost is a hard delay during which recent local edits remain invisible to other clients reading the bucket directly. Unlike the 1.8-second propagation of updates to known files, new object visibility depends entirely on this timer expiring. The design trades cross-client synchronization immediacy for write throughput efficiency, so applications requiring strict serializability across the S3 API and NFS interfaces will fail without external locking mechanisms. Mission and Vision advises operators to treat the storage layer as eventually consistent during this aggregation phase.
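A toy model shows why aggregation protects the bucket. The class below is not AWS's implementation; it assumes a simple fixed window in which repeated writes to the same path are coalesced and each changed path yields one PUT when the window closes. Under that assumption, a client rewriting one file every 10 milliseconds for a minute produces 6,000 filesystem writes but a single PUT.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class WriteAggregator:
    """Toy model of fixed-window write aggregation (not AWS's implementation).

    Writes to the same path inside one window are coalesced; every path that
    changed produces exactly one PUT when the window closes.
    """
    window_s: float = 60.0
    window_start: float = 0.0
    pending: Dict[str, bytes] = field(default_factory=dict)  # path -> latest data

    def write(self, now: float, path: str, data: bytes) -> List[Tuple[str, bytes]]:
        """Record a write at time `now`; return PUTs flushed by an expired window."""
        flushed = self.flush_if_due(now)
        self.pending[path] = data  # a later write replaces an earlier one
        return flushed

    def flush_if_due(self, now: float) -> List[Tuple[str, bytes]]:
        """Emit one PUT per changed path if the current window has elapsed."""
        if now - self.window_start < self.window_s:
            return []
        puts = sorted(self.pending.items())
        self.pending.clear()
        self.window_start = now  # start the next window
        return puts


if __name__ == "__main__":
    agg = WriteAggregator()
    puts = []
    # One client rewrites the same file every 10 ms for a full minute.
    for i in range(6000):
        puts += agg.write(i * 0.01, "logs/app.log", f"v{i}".encode())
    puts += agg.flush_if_due(60.0)
    print(len(puts), puts[0])  # 1 ('logs/app.log', b'v5999')
```

The same model also makes the trade-off visible: until `flush_if_due` fires, a reader going straight to the bucket sees none of those 6,000 writes.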

Diagnosing Invisible Files via ImportFailures Metrics

In Corey Quinn's testing, six of ten malformed objects vanished from the NFS view without client errors. The silence occurs because the filesystem rejects incompatible keys during import rather than surfacing POSIX errors to the application layer. Objects with trailing slashes or excessive path lengths remain stored in S3 but disappear from the directory listing entirely; the root cause is a translation failure between flat object keys and hierarchical file paths. Operators missing legacy data must inspect the `ImportFailures` metric in the `AWS/S3/Files` namespace, dimensioned by `FileSystemId`. This counter increments only when key names violate filesystem constraints such as reserved characters or depth limits. The current implementation, however, lacks granular logging that maps specific failed keys to metric spikes; according to engineering statements, instrumentation that points to the exact unimported objects is on the product roadmap. Standard mount diagnostics provide no visibility into these drop events, so network teams must proactively monitor this CloudWatch signal to detect data invisibility.

| Symptom | Source Location | Detection Method |
|---|---|---|
| Missing files | S3 Bucket | ImportFailures metric |
| Client errors | Local OS | None reported |
| Data loss | None | Object remains in S3 |

The operational implication demands a shift in troubleshooting workflows for hybrid storage environments. Administrators cannot trust negative search results from the mounted interface when migrating buckets with non-standard naming conventions. Verifying object presence requires direct S3 API queries alongside filesystem checks. Mission and Vision recommends establishing automated alerts on the ImportFailures counter before initiating large-scale migrations.
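An automated alert on the counter could look like the sketch below, which builds keyword arguments for boto3's CloudWatch `put_metric_alarm` call. The namespace, metric name, and `FileSystemId` dimension come from the description above. The file system ID, SNS topic ARN, five-minute period, and the choice to treat missing data as "not breaching" are illustrative assumptions.

```python
def import_failures_alarm(file_system_id: str, sns_topic_arn: str) -> dict:
    """Return kwargs for boto3's cloudwatch.put_metric_alarm(**kwargs)."""
    return {
        "AlarmName": f"s3files-import-failures-{file_system_id}",
        "Namespace": "AWS/S3/Files",          # as described in this article
        "MetricName": "ImportFailures",
        "Dimensions": [{"Name": "FileSystemId", "Value": file_system_id}],
        "Statistic": "Sum",
        "Period": 300,                        # evaluate 5-minute sums
        "EvaluationPeriods": 1,
        "Threshold": 0.0,
        "ComparisonOperator": "GreaterThanThreshold",  # alarm on any failure
        "TreatMissingData": "notBreaching",   # no datapoints treated as no failures
        "AlarmActions": [sns_topic_arn],
    }


if __name__ == "__main__":
    kwargs = import_failures_alarm(
        "fs-0123456789abcdef0",  # placeholder file system ID
        "arn:aws:sns:us-east-1:111122223333:storage-alerts",  # placeholder topic
    )
    print(kwargs["AlarmName"])
    # With credentials configured:
    #   import boto3
    #   boto3.client("cloudwatch").put_metric_alarm(**kwargs)
```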

POSIX Metadata Limits and Unsupported Key Patterns

S3 Files limits path names to 1,024 bytes, matching AWS's documented maximum S3 object key size. Legacy applications that store deep directory hierarchies must refactor paths or face silent import failures during mount operations. The mechanism maps flat object keys to hierarchical file paths and discards entries that violate POSIX naming conventions, such as trailing slashes or reserved components like "..". In Corey Quinn's testing, six of ten edge-case objects vanished from the NFS view without generating client-side errors or log entries. Operators relying on `ImportFailures` metrics must actively poll CloudWatch, since the filesystem provides no immediate feedback on missing data. The lack of support for NFS ACLs and hard links also means authorization models that depend on these features will break unexpectedly. This creates a tension between maintaining legacy key structures and adopting modern filesystem semantics: networks migrating petabyte-scale buckets with non-compliant keys risk significant data invisibility unless preprocessing scripts sanitize object names first.

| Constraint | Behavior | Operational Risk |
|---|---|---|
| Path Length | Rejects paths over 1,024 bytes | Deep trees invisible on the mount |
| Key Format | Ignores trailing slashes | Silent file invisibility |
| Permissions | Drops NFS ACLs | Access control gaps |

Mission and Vision advises auditing bucket contents for non-standard keys before enabling the NFS gateway.
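A pre-mount audit can start with a per-key check against the patterns reported in this article: trailing slashes, empty or `.`/`..` path components, non-ASCII characters, and paths over 1,024 bytes. The function below is a heuristic sketch, not AWS's validation logic. Flagging every non-ASCII character is deliberately conservative and will likely over-report. Because S3 itself caps keys at 1,024 bytes, the length check matters mainly for paths created on the NFS side.

```python
from typing import List


def key_issues(key: str, max_bytes: int = 1024) -> List[str]:
    """Return reasons a key may not appear in the NFS view (empty if none found).

    Heuristic based on the patterns reported in this article, not AWS's rules.
    """
    issues = []
    if len(key.encode("utf-8")) > max_bytes:
        issues.append(f"path longer than {max_bytes} bytes")
    if key.endswith("/"):
        issues.append("trailing slash")
    if "//" in key:
        issues.append("empty path component (double slash)")
    if any(part in (".", "..") for part in key.split("/")):
        issues.append("'.' or '..' path component")
    if not key.isascii():
        issues.append("non-ASCII characters")  # conservative: flags all non-ASCII
    return issues


if __name__ == "__main__":
    for k in ["data/2026/report.csv", "exports/", "a//b.txt",
              "../etc/passwd", "photos/\U0001F305.jpg"]:
        print(repr(k), key_issues(k))
```

Keys can be fed in from any bucket listing, for example boto3's `list_objects_v2` paginator, and the flagged ones renamed before the NFS gateway is enabled.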

Comparative Analysis of S3 Files Against Mountpoint and s3fs-fuse

Managed EFS Caching Layer vs Community FUSE Drivers

S3 Files utilizes a managed caching layer built on EFS infrastructure to provide consistent NFS semantics without local disk dependencies. Based on Core Product Performance and Pricing, this architecture prevents the data corruption historically observed in community FUSE drivers like s3fs-fuse or Goofys during conflict resolution. The mechanism operates by maintaining an active working set within the AWS network, effectively decoupling client I/O patterns from S3 API latency constraints. Operators gain reliable file locking and atomic updates that user-space drivers cannot guarantee due to their reliance on local instance resources. The trade-off is financial predictability versus architectural complexity; bare-metal workloads requiring zero-latency local access may still favor self-hosted FUSE solutions despite their consistency risks. The reliance on a centralized cache eliminates the need for operators to engineer complex local eviction policies or monitor disk space on every compute node.

Parallel GET Speeds and Git Operations for Mid-Market Teams

Parallel GET throughput reaches 3 GB/s per client, eliminating the latency spike that stalls git clone operations on s3fs. This advantage stems from a read-bypass mechanism: requests larger than 128 KB stream directly from S3, bypassing the managed cache entirely. According to Core Product Performance and Pricing, community FUSE drivers like s3fs often turn "laggy" during the same high-concurrency repository operations because of on-disk data caching. The limitation is that this performance profile favors read-heavy workflows over write-intensive compilation loops, where local disk IOPS dominate. Mid-market teams should deploy S3 Files when consistent NFS semantics outweigh the appeal of free, fail-fast FUSE drivers. Mountpoint for S3 remains available for large-file throughput workloads, where unsupported operations fail fast rather than queue; as reported by Market Positioning and Future Roadmap, it targets single-client bulk transfers rather than shared filesystem collaboration. For specific pipeline stages, operators therefore choose between the shared NFS consistency of S3 Files and the raw, unbuffered speed of Mountpoint.

| Feature | S3 Files | Mountpoint for S3 | s3fs |
|---|---|---|---|
| Primary Use | Shared NFS access | Single-client throughput | Legacy compatibility |
| Consistency | Strong (EFS-backed) | Eventual | Weak/Variable |
| Cost Model | $0. | | |

Mission and Vision guidance suggests reserving S3 Files for active development branches requiring locking, while offloading artifact storage to cheaper, direct S3 access patterns.
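The read-bypass rule described above can be modeled in a few lines. This sketch assumes "128 KB" means 128 KiB and that only requests strictly larger than the threshold bypass the cache; both are interpretations, not documented behavior. The hypothetical `bypass_share` helper estimates what fraction of a workload's bytes would stream straight from S3, which helps judge whether a workload is dominated by cached small reads or by direct large reads.

```python
from typing import Iterable

READ_BYPASS_THRESHOLD = 128 * 1024  # assumption: "128 KB" read as 128 KiB


def read_path(request_bytes: int, threshold: int = READ_BYPASS_THRESHOLD) -> str:
    """Return which tier would serve a read of this size under the described rule."""
    if request_bytes < 0:
        raise ValueError("request size must be non-negative")
    return "s3-direct" if request_bytes > threshold else "cache"


def bypass_share(request_sizes: Iterable[int]) -> float:
    """Fraction of requested bytes that would stream straight from S3."""
    sizes = list(request_sizes)
    total = sum(sizes)
    if total == 0:
        return 0.0
    direct = sum(s for s in sizes if read_path(s) == "s3-direct")
    return direct / total
```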

Conflict Resolution: Zero Split-Brain States in S3 Files

Per Core Product Performance and Pricing, ten deliberate conflicts resolved in under two seconds with zero split-brain states. The mechanism forces a winner-take-all outcome in which the S3 API write overwrites concurrent NFS writes instead of merging deltas. This contrasts sharply with s3fs-fuse or Goofys, where simultaneous access has historically triggered data corruption or undefined behavior in the driver layer. For operators, the result is deterministic convergence during batch processing without a separate locking service, provided that losing the overwritten write is acceptable. Legacy applications that expect merge-friendly behavior, however, will see their writes overwritten without warning logs.

| Feature | S3 Files | s3fs-fuse / Goofys |
|---|---|---|
| Conflict Outcome | Last-write-wins (deterministic) | Data corruption or silent loss |
| Convergence Time | < 2 seconds | Indefinite / None |
| Cache Architecture | Managed EFS layer | Local disk or memory only |
| POSIX Semantics | Full NFS v4. | |

Mission and Vision analysis indicates that while community drivers offer free software, they impose high operational risk through unpredictable failure modes during concurrent writes. The managed caching layer absorbs the I/O storm, ensuring the backend bucket remains consistent even when clients mutate objects every 10 milliseconds. Operators must accept that conflict resolution here is destructive to the loser of the race condition.
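The destructive outcome can be stated as a small decision function. The conflict paragraph describes the S3 API write overwriting the concurrent NFS write, while the comparison table labels the policy last-write-wins. This sketch models the first reading, treating the bucket as authoritative. The `Version` type and the `base_etag` bookkeeping (the last version both sides agreed on) are hypothetical simplifications, not AWS's algorithm.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Version:
    source: str  # "s3-api" or "nfs"
    etag: str    # identifies the content written


def resolve(base_etag: str, bucket: Version,
            mount: Version) -> Tuple[Version, Optional[Version]]:
    """Return (winner, discarded) for one object after a sync pass.

    Simplified model: the bucket is authoritative, so when both sides changed
    since the last agreed version, the S3 API write wins and the NFS write is lost.
    """
    bucket_changed = bucket.etag != base_etag
    mount_changed = mount.etag != base_etag
    if bucket_changed and mount_changed and bucket.etag != mount.etag:
        return bucket, mount  # true conflict: destructive for the NFS writer
    if mount_changed:
        return mount, None    # only the mount changed: export it to S3
    return bucket, None       # only the bucket changed, or nothing did
```

Returning the discarded version explicitly is the point of the sketch: an application that wants to survive these races has to detect the loss itself, because the service does not merge.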

Operationalizing S3 Files for Data Pipelines and Enterprise Workloads

S3 Files NFS Semantics via Managed EFS Caching

Managed caching on EFS infrastructure allows S3 Files to deliver consistent NFS semantics without local disk buffers. Based on Core Product Performance and Pricing, the service charges $0.06/GB for writes to sustain the active working set required for low-latency file locking. The system translates flat object keys into hierarchical paths and buffers mutations before committing them as atomic PUT requests to the S3 API. This design prevents the data corruption frequently observed in community FUSE drivers like s3fs-fuse or Goofys during simultaneous write operations. Every modification triggers the higher write-tier pricing instead of standard request costs, so operators should architect pipelines to minimize write churn. Network engineers will find that S3 Files suits read-heavy analytics but requires budget approval for write-intensive workloads where EFS-aligned rates apply.
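Because writes are billed per GB, churn rather than file size drives the cost. The sketch below assumes every full rewrite of a file through the mount is billed at the $0.06/GB write rate, regardless of how the 60-second window later coalesces PUTs to S3; that billing basis is an assumption to confirm against AWS pricing, and the function name is hypothetical.

```python
WRITE_RATE_PER_GB = 0.06  # write rate quoted above


def monthly_write_cost(file_gb: float, rewrites_per_day: float, days: int = 30) -> float:
    """Estimate write charges (USD) for a file fully rewritten N times per day.

    Assumption: every rewrite is billed in full at the write rate, however the
    60-second window later coalesces the resulting PUTs to S3.
    """
    if file_gb < 0 or rewrites_per_day < 0 or days < 0:
        raise ValueError("inputs must be non-negative")
    return file_gb * rewrites_per_day * days * WRITE_RATE_PER_GB


if __name__ == "__main__":
    # A 2 GB file rewritten hourly for a 30-day month
    print(round(monthly_write_cost(2, 24), 2))  # 86.4
```

Under this assumption, the hourly rewrite generates 1,440 GB of billed writes, about $86 a month, while keeping the same 2 GB file in the active working set costs about $0.60 a month at $0.30/GB.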

Mounting S3 Buckets as NFS Shares for Data Pipelines

Establishing valid NFS shares requires TCP port 2049 access between compute resources and the mount target. An AWS case study (https://aws.amazon.com/solutions/case-studies/apollo-tyres-case-study/) reports that Apollo Tyres completed a similar gateway setup within one day without business interruption. The mechanism routes file system calls through a managed cache while streaming large reads directly from the authoritative S3 store. Operators gain standard POSIX semantics that let legacy data pipelines run without code refactoring. In Corey Quinn's testing, however, six of ten edge-case objects vanished from the view upon mounting, with no client errors, despite remaining visible in the bucket. This silent failure mode demands proactive monitoring of the `ImportFailures` metric in the `AWS/S3/Files` namespace rather than reliance on local logs. When selecting this service for pipelines, teams must weigh consistent performance against the risk of legacy keys becoming invisible. Mission and Vision recommends validating object naming conventions before migration to prevent silent data gaps in production workflows. On cost, premium rates apply only to the active working set, while the bulk repository remains at base storage tiers.
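Before mounting, a quick TCP probe confirms that the security group and routing actually allow port 2049. The sketch below uses only the standard library. It checks reachability, not NFS protocol health or IAM permissions, and the address in the usage comment is a placeholder for a mount target IP.

```python
import socket


def nfs_port_reachable(host: str, port: int = 2049, timeout_s: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout_s.

    This only proves TCP reachability; it does not speak NFS or check IAM.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout_s):
            return True
    except OSError:
        # Refused connections, timeouts, and DNS failures are all OSError subclasses.
        return False


# Example (placeholder mount target address):
#   nfs_port_reachable("10.0.1.25")
```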

Silent Import Failures and Invisible Keys in NFS Views

Six of ten edge-case objects vanish from the NFS view without client errors, remaining visible only through the S3 API. Core Product Performance and Pricing data confirms that these keys fail silently during import, triggering no log entries on the client instance. The mechanism filters incompatible path names, such as those with trailing slashes or double slashes, before they reach the file system layer. Operators must probe the ImportFailures metric in CloudWatch to detect these missing objects, as standard `ls` commands return successful but incomplete lists. The following table contrasts visibility outcomes for tested key patterns:

| Key Pattern Type | NFS View Status | S3 Bucket Status | Error Signal |
|---|---|---|---|
| Standard ASCII | Visible | Visible | None |
| Trailing Slash | Invisible | Visible | CloudWatch Metric |
| Path Traversal | Invisible | Visible | CloudWatch Metric |
| Emoji Characters | Invisible | Visible | CloudWatch Metric |

Mission and Vision analysis indicates that legacy data lakes containing irregular naming conventions will suffer immediate data accessibility gaps upon migration. The result is operational blindness: teams cannot resolve permission-denied issues or conflict resolution failures if the target files never appear in the mounted directory. Unlike s3fs, which often throws explicit errors, this architecture prioritizes stability over granular failure reporting. Administrators must audit source buckets for non-compliant keys before mounting to prevent silent data loss in production pipelines, because default mount behaviors invite undetected data gaps.
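Since `ls` alone cannot prove absence, one check is to diff the bucket's key list against what the mount actually exposes. The sketch below assumes the keys were collected separately through the S3 API (for example with boto3's `list_objects_v2` paginator) and that the mount maps keys to paths one-to-one. Keys ending in "/" will always be reported, which is intended here because trailing slashes are among the patterns that never appear on the mount.

```python
import os
from typing import Iterable, Set


def missing_on_mount(bucket_keys: Iterable[str], mount_root: str) -> Set[str]:
    """Return bucket keys with no matching regular file under mount_root."""
    seen = set()
    for dirpath, _dirnames, filenames in os.walk(mount_root):
        rel_dir = os.path.relpath(dirpath, mount_root)
        for name in filenames:
            rel = name if rel_dir == "." else os.path.join(rel_dir, name)
            seen.add(rel.replace(os.sep, "/"))  # compare using S3-style separators
    return {key for key in bucket_keys if key not in seen}
```

A non-empty result is the list of objects the pipeline cannot see, which is exactly the signal that a clean directory listing hides.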

About

Alex Kumar, Senior Platform Engineer and Infrastructure Architect at Rabata.io, brings deep practical expertise to the discussion surrounding S3 Files. With a career focused on Kubernetes storage architecture and disaster recovery, Kumar navigates the complexities of mounting object storage as file systems for enterprise clients daily. His work at Rabata.io, a specialized S3-compatible storage provider, directly involves optimizing data access patterns for AI/ML startups that demand high performance without vendor lock-in. Having previously served as an SRE for high-traffic platforms, he understands the critical friction points when treating S3 buckets as traditional directories. This article draws on his hands-on experience stress-testing storage limits to evaluate AWS's new NFS offering. By connecting real-world infrastructure challenges with Rabata.io's mission of transparent, high-speed storage, Kumar provides a factual assessment of whether this evolution truly solves longstanding architectural constraints or merely shifts the burden of complexity.

Conclusion

The economic model of cloud storage collapses when operational blind spots inflate the effective cost per reliable byte. While base tiers offer pennies per gigabyte, the silent invisibility of non-compliant keys creates a hidden tax on engineering time and data integrity that no pricing table reflects. As the market expands toward nearly $180 billion by 2027, organizations relying on NFS-like interfaces for critical pipelines will face compounding latency penalties and unexplained data gaps unless they decouple their architecture from legacy filesystem expectations immediately. The bimodal delete delays and silent import failures indicate that stability is being traded for transparency, a dangerous compromise for production workloads requiring strict consistency.

Teams must migrate away from direct mounting for write-heavy or legacy-rich environments within the next two quarters, adopting native S3 API interactions or specialized gateways that expose underlying metadata errors explicitly. Do not wait for a corruption incident to validate your object naming conventions; the window for passive observation has closed. Start this week by auditing your bucket keys with the AWS CLI, specifically filtering for trailing slashes and non-ASCII characters, which trigger silent drops. This single diagnostic step prevents the catastrophic data loss scenarios that standard directory listings completely obscure.
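That audit can be scripted around the CLI's JSON output. The sketch below assumes the listing was saved with `aws s3api list-objects-v2 --bucket BUCKET --output json > listing.json` (the CLI follows pagination automatically) and flags only the two patterns named above. The script name, file name, and bucket name are placeholders.

```python
import json
import sys
from typing import List


def flag_keys(listing: dict) -> List[str]:
    """Return keys with a trailing slash or any non-ASCII character."""
    flagged = []
    for obj in listing.get("Contents", []):  # "Contents" is absent for empty buckets
        key = obj["Key"]
        if key.endswith("/") or not key.isascii():
            flagged.append(key)
    return flagged


if __name__ == "__main__" and len(sys.argv) == 2:
    # Usage: python flag_keys.py listing.json
    with open(sys.argv[1], encoding="utf-8") as fh:
        for key in flag_keys(json.load(fh)):
            print(key)
```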