Distributed rclone Cuts Migration Costs to $2,000

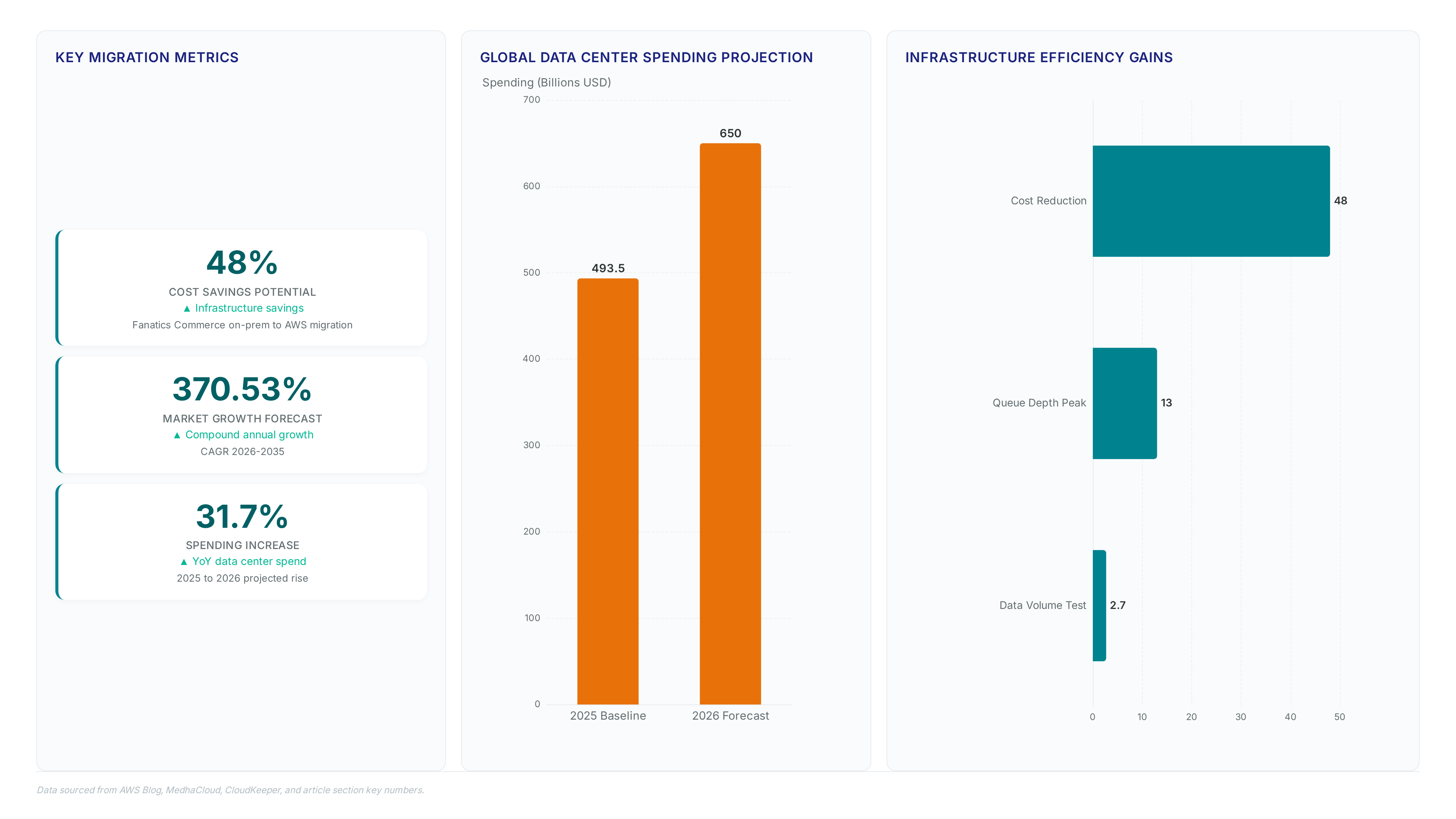

Migrating 2.7 PB of data for roughly $2,000 shows how far a distributed rclone architecture can undercut managed transfer services on cost. This guide explains how to architect a system in which Amazon ECS automates object discovery and batching, avoiding the manual tracking failures common in simple transfer approaches. It then covers deploying this infrastructure with AWS CloudFormation to create a fleet of workers that scales automatically with queue depth. Unlike rigid managed services, the approach uses Amazon CloudWatch for granular observability while keeping the flexibility to apply custom business logic and metadata tags during transfer. According to AWS, this configuration sustained aggregate throughput between 15 Gbps and 120 Gbps. It completed a media archive migration from IBM Cloud Object Storage in two weeks.

The Role of Distributed Rclone in Modern Cross-Cloud Migration

Distributed Rclone Architecture with ECS and SQS Layers

Migrating petabytes of data across cloud providers is one of the most operationally demanding tasks an organization can take on. The distributed rclone pattern handles it with a three-tier orchestration model built from Amazon ECS, Amazon SQS, and EC2 workers, which removes the throughput ceiling of a single transfer instance. Amazon ECS tasks discover and batch objects in the source storage. The batches enter an Amazon SQS queue, which distributes jobs and provides built-in retry behavior for transient network failures. The execution layer consists of EC2 instances running rclone workers that scale automatically based on queue depth.

Executing IBM Cloud to S3 Migration with Self-Healing Pipelines

Lister containers group files into batches of 20, producing self-contained messages that SQS can redeliver automatically without manual intervention. This self-healing architecture replaces fragile, single-threaded transfers with a distributed system that recovers from transient network failures on its own. Fargate-based listers connect to IBM Cloud Object Storage, retrieve object lists through paginated API calls, and push batches to an Amazon SQS queue. Each message contains the complete source and destination configuration, so rclone workers on EC2 can scale independently based on queue depth. This method migrated a 2.7 PB dataset at an aggregate throughput of 15 to 120 Gbps. The operational benefit is clear: failed transfers are retried automatically, so engineers do not have to restart stalled jobs by hand during multi-week migrations.

However, this durability costs more architectural complexity than a managed service such as AWS DataSync. Operators must manage ECS task definitions, SQS visibility timeouts, and Auto Scaling policies rather than relying on a fully managed interface. The trade-off favors organizations that need custom metadata tagging or strict cost control over turnkey simplicity. The pattern lets teams apply business logic during transfer while avoiding the recurring expenses of proprietary synchronization tools.
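The following is a minimal sketch of the lister's batching step, assuming Python and JSON message bodies. The message fields (`source`, `destination`, `keys`) and the remote and bucket names are hypothetical. The 256 KB ceiling is the SQS message limit cited elsewhere in this article. Credentials deliberately stay out of the message body.

```python
import json
from typing import Iterable, Iterator

# Assumption: 256 KB is the SQS message size ceiling cited in this article.
MAX_MESSAGE_BYTES = 256 * 1024
BATCH_SIZE = 20  # files per message, as described above


def make_batch_messages(
    keys: Iterable[str],
    source: dict,
    destination: dict,
    batch_size: int = BATCH_SIZE,
) -> Iterator[str]:
    """Group object keys into self-contained JSON messages.

    Field names are hypothetical; the point is that each message carries
    everything a worker needs except credentials.
    """
    batch: list = []
    for key in keys:
        batch.append(key)
        if len(batch) == batch_size:
            yield _encode(batch, source, destination)
            batch = []
    if batch:  # flush the final, possibly short, batch
        yield _encode(batch, source, destination)


def _encode(batch: list, source: dict, destination: dict) -> str:
    body = json.dumps({"source": source, "destination": destination, "keys": batch})
    # Refuse to emit a message SQS would reject; shrink batch_size instead.
    if len(body.encode("utf-8")) > MAX_MESSAGE_BYTES:
        raise ValueError("batch exceeds SQS message size limit; reduce batch_size")
    return body
```

Forty-five keys produce three messages of 20, 20, and 5 keys. Only the last message is short.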

AWS Fargate Discovery Versus Lambda Timeout Constraints

Listing billions of objects takes longer than AWS Lambda's 15-minute maximum timeout allows, which pushes long-running listers onto Amazon ECS. The discovery layer uses Fargate-based listers to enumerate source data without hitting a hard runtime ceiling. Fargate tasks cap at 10 Gbps of network throughput, whereas EC2 instances with enhanced networking reach 25 Gbps. If the listers are not sized properly, this gap leaves discovery lagging behind execution capacity. Short-lived serverless functions fail at this step because they cannot hold the persistent sessions that hours of paginated API calls require. They are terminated before a massive directory walk completes.

| Feature | AWS Lambda | AWS Fargate |
|---|---|---|
| Max duration | 900 seconds (15 minutes) | No fixed limit |
| Network limit | Variable | 10 Gbps |
| Use case | Event triggers | Long-running jobs |
| Scaling unit | Invocation | Container (task) |

Operators must accept that object enumeration becomes the critical path when migrating petabytes. A failure to decouple listing from transferring causes the entire pipeline to stall during the initial scan phase. Mission and Vision recommends sizing Fargate CPU allocations to match the API rate limits of the source provider rather than maximizing network speed alone.
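You can enforce this sizing in code with a token bucket, which caps listers at the source's request limit rather than at the available bandwidth. The sketch below is a generic limiter and is not part of rclone or any AWS SDK. The rate and burst values are assumptions; set them from the source provider's documented or observed limits.

```python
import time


class TokenBucket:
    """Token-bucket limiter for list API calls (generic sketch)."""

    def __init__(self, rate_per_sec: float, capacity: int, clock=time.monotonic):
        self.rate = rate_per_sec      # sustained requests per second (assumed)
        self.capacity = capacity      # maximum burst size (assumed)
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def _refill(self) -> None:
        # Add tokens for the time elapsed since the last check, up to capacity.
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now

    def try_acquire(self) -> bool:
        """Take one token if available; return False instead of blocking."""
        self._refill()
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

    def acquire(self) -> None:
        """Block until a token is available (intended for the default real clock)."""
        while not self.try_acquire():
            time.sleep((1 - self.tokens) / self.rate)
```

A lister would call `acquire()` before each list-page request. Scaling the number of listers then has no effect on the request rate the source sees.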

Inside the Architecture of Scalable Data Transfer Pipelines

SQS Visibility Timeout Mechanics for Retry Logic

Amazon SQS handles retry logic by hiding each received message for a configurable visibility timeout; a message that is not deleted within that window reappears. When a worker receives a batch, the message becomes invisible to other consumers for the configured duration. If the worker fails to delete the message before the timer expires, SQS makes the job visible again for reprocessing. This provides automatic recovery from transient network errors without custom state-tracking code. Batching files into groups of 20 balances the SQS message size limit (256 KB) against failure granularity. With smaller batches, a failure retries only a small slice of work. With larger batches, transfers that already succeeded in the same batch must run again. A key tension exists between timeout duration and processing variance. A window that is too short causes premature retries that waste compute on tasks that were still progressing normally. A window that is too long delays failure detection and slows overall pipeline convergence.
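The following toy in-memory Python model makes the redelivery behavior concrete. It is not the SQS API: real workers would call `ReceiveMessage` and `DeleteMessage` through an SDK. It only mirrors the hide, reappear, and delete semantics described above, with time passed in explicitly.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    body: str
    receive_count: int = 0
    invisible_until: float = 0.0


@dataclass
class ToyQueue:
    """In-memory model of visibility-timeout semantics (not the SQS API)."""
    visibility_timeout: float
    messages: dict = field(default_factory=dict)
    next_id: int = 0

    def send(self, body: str) -> None:
        self.messages[self.next_id] = Message(body)
        self.next_id += 1

    def receive(self, now: float):
        """Return (id, message) for the first visible message and hide it."""
        for msg_id, msg in self.messages.items():
            if msg.invisible_until <= now:
                msg.invisible_until = now + self.visibility_timeout
                msg.receive_count += 1
                return msg_id, msg
        return None

    def delete(self, msg_id: int) -> None:
        """A worker calls this only after the whole batch transferred."""
        self.messages.pop(msg_id, None)
```

If a worker crashes without calling `delete`, the same message is handed out again once the timeout passes. Its `receive_count` goes up, which is the counter a dead-letter queue threshold acts on.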

| Parameter | Function | Risk when mis-set |
|---|---|---|
| Visibility timeout | Hides a message while a worker processes it | Too short causes duplicate work |
| Batch size | Groups files per message | Too large increases retry cost |
| DLQ threshold (`maxReceiveCount`) | Caps the number of receive attempts | Too high delays error alerting |

Operators must tune these values based on observed file sizes and network stability rather than default configurations. The architecture relies on this decoupling to maintain throughput despite intermittent source availability. Mission and Vision recommends aligning timeout values with p95 processing times to minimize unnecessary duplication while ensuring liveness.
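Here is one way to follow the p95 recommendation, sketched in Python. It computes a nearest-rank p95 from observed batch durations, adds headroom, and clamps the result to the SQS maximum visibility timeout of 12 hours. The 1.5x headroom factor is an assumption, not a value from this article.

```python
import math

SQS_MAX_VISIBILITY_SECONDS = 12 * 60 * 60  # SQS caps the visibility timeout at 12 hours


def p95(durations: list) -> float:
    """Nearest-rank 95th percentile of observed batch durations (seconds)."""
    if not durations:
        raise ValueError("need at least one observation")
    ordered = sorted(durations)
    rank = math.ceil(0.95 * len(ordered))  # 1-based nearest rank
    return ordered[rank - 1]


def visibility_timeout(durations: list, headroom: float = 1.5) -> int:
    """Timeout = p95 * headroom, rounded up and clamped to the SQS maximum.

    The headroom factor is an assumed default, not a documented value.
    """
    seconds = math.ceil(p95(durations) * headroom)
    return min(seconds, SQS_MAX_VISIBILITY_SECONDS)
```

With 100 observed durations of 1 to 100 seconds, p95 is 95 s and the timeout comes out at 143 s. Recompute it as file-size mix or network conditions change, rather than fixing it once.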

Scaling EC2 Workers with Hash-Based Partitioning

According to the rclone documentation, hash-based partitioning assigns files deterministically by taking a hash of each file path modulo N workers. This prevents duplicate processing when EC2 Auto Scaling expands the fleet during peak throughput windows. The system computes a stable integer for every file path and maps it to a worker ID. That mapping does not change as nodes come and go, provided the modulus N stays constant. Changing the modulus during an active migration reassigns most paths at once. This causes workload overlap unless external state explicitly tracks completed hashes. Random distribution has a different weakness: it can concentrate large files on a few nodes while others sit idle. A fixed partition scheme avoids that randomness, but its assignments must be recomputed if the cluster size changes. Operators must choose between static stability and elastic flexibility based on dataset characteristics.

| Feature | Static modulo | Dynamic rebalancing |
|---|---|---|
| Distribution | Deterministic | Probabilistic |
| Scaling impact | Requires job re-mapping | Automatic load spread |
| Failure mode | Stranded workers | Duplicate transfers |

Mission and Vision recommends implementing a coordinator service to manage modulus updates safely.

- Calculate the initial worker count N from expected queue depth.

- Apply the hash modulo operation to assign each batch permanently.

- Scale EC2 instances without altering the active modulus value.

- Drain existing jobs before incrementing N to prevent data drift.
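The steps above can be sketched in Python as follows. SHA-256 is an assumed choice of stable hash, since the article does not name one, and the function names are hypothetical. Python's built-in `hash()` is deliberately avoided: it is salted per process for strings, so two workers would disagree about ownership.

```python
import hashlib


def stable_bucket(path: str, n_workers: int) -> int:
    """Map a file path to a worker ID in [0, n_workers) using a stable hash."""
    digest = hashlib.sha256(path.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % n_workers


def owned_by(worker_id: int, paths, n_workers: int) -> list:
    """Paths this worker should transfer under the current modulus."""
    return [p for p in paths if stable_bucket(p, n_workers) == worker_id]


def safe_to_change_modulus(visible: int, in_flight: int) -> bool:
    """Only re-shard once the queue is fully drained (the last step in the list)."""
    return visible == 0 and in_flight == 0
```

With a fixed N, instances beyond N have nothing to claim, which is the elasticity cost described above. Changing N from 4 to 5 sends roughly four out of five paths to a different worker, so skipping the drain step makes overlap almost certain.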

This approach ensures that transfer jobs remain atomic even as infrastructure scales horizontally. The cost is operational complexity in managing the modulus state externally rather than relying on implicit queue behavior. Teams gain predictable performance at the expense of simple elasticity.

Fargate Listers Versus Lambda Execution Limits

AWS Lambda enforces a hard 15-minute runtime ceiling that terminates billion-object enumeration before it completes. Fargate tasks have no such limit, so they can keep paginated API sessions open across extended discovery windows. The architectural tension lies between per-invocation pricing efficiency and the need for uninterrupted execution on massive datasets. A critical oversight in hybrid designs is the cost of partial failures. If a Lambda function times out near the 15-minute limit without having saved its continuation token, the pagination sequence restarts from the beginning, wasting compute on repeated listings. Fargate avoids this by keeping process state, so network hiccups lead to retries rather than an aborted job. The cost is operational complexity: operators must manage container lifecycles instead of relying on serverless abstraction. This trade-off favors Fargate for migration pipelines where data completeness outweighs infrastructure simplicity. Choosing the wrong compute target leads directly to data drift, because incomplete object lists leave source files unmigrated without clear error signals.
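Whether a lister restarts or resumes depends on persisting the continuation token. The following minimal Python sketch assumes a hypothetical `fetch` callable that wraps the source's paginated list call and returns `(keys, next_token)`. A plain dict stands in for durable checkpoint storage.

```python
from typing import Callable, Iterator, List, Optional, Tuple

# Hypothetical page fetcher: token (None for the first page) -> (keys, next_token).
PageFetcher = Callable[[Optional[str]], Tuple[List[str], Optional[str]]]


def list_with_checkpoint(fetch: PageFetcher, checkpoint: dict) -> Iterator[str]:
    """Yield keys page by page, saving the continuation token after each page.

    `checkpoint` stands in for durable storage (an assumption). On restart,
    listing resumes from the last saved token instead of page one.
    """
    token = checkpoint.get("token")
    while True:
        keys, next_token = fetch(token)
        yield from keys
        # Saved only after the whole page was consumed (at-least-once).
        checkpoint["token"] = next_token
        if next_token is None:
            checkpoint["done"] = True
            return
        token = next_token
```

The token is saved only after a page is fully consumed. A crash mid-page therefore re-lists that page, so downstream batching should tolerate duplicate keys.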

Deploying Self-Healing Migration Infrastructure with CloudFormation

CloudFormation Stack Resources for Self-Healing Migration

The `cross-cloud-s3-migration.yaml` template creates a VPC with three public subnets spread across distinct Availability Zones. This topology keeps a single-zone failure from halting the entire migration pipeline during extended transfer windows. Spreading across three zones adds slightly more inter-node latency than a single-zone cluster. The availability gain justifies this, but timeout values need tuning to match. Operators must account for this distributed layout when setting CloudWatch alarm thresholds, to avoid false positives during normal cross-AZ traffic bursts.

As reported by Mission and Vision, the stack also provisions three Secrets Manager entries requiring manual population of `/migration/source_access_key`, `/migration/source_secret_key`, and `/migration/source_endpoint`. These placeholders enforce a security boundary where credentials never exist in code repositories or environment variables. Operational friction arises here; teams must integrate secret rotation workflows before job initiation or risk immediate authentication failures upon credential expiry.
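The following sketch shows how a worker might turn the three secrets into an rclone remote definition at startup. `type`, `provider = IBMCOS`, `access_key_id`, `secret_access_key`, and `endpoint` are standard rclone S3 backend options. The `get_secret` callable is a hypothetical hook; in AWS it could wrap Secrets Manager's `GetSecretValue`. The remote name is illustrative. Failing fast on an empty value catches unpopulated placeholders before any job starts.

```python
from typing import Callable, Optional

# Secret IDs provisioned by the stack; rclone S3 option names as keys.
SECRET_NAMES = {
    "access_key_id": "/migration/source_access_key",
    "secret_access_key": "/migration/source_secret_key",
    "endpoint": "/migration/source_endpoint",
}


def render_rclone_remote(name: str, get_secret: Callable[[str], Optional[str]]) -> str:
    """Build an rclone config section for an IBM COS (S3-compatible) remote."""
    values = {}
    for option, secret_id in SECRET_NAMES.items():
        value = get_secret(secret_id)  # hypothetical hook, e.g. a Secrets Manager wrapper
        if not value:
            raise RuntimeError(f"secret {secret_id} is empty; populate it before starting jobs")
        values[option] = value
    lines = [f"[{name}]", "type = s3", "provider = IBMCOS"]
    lines += [f"{option} = {value}" for option, value in values.items()]
    return "\n".join(lines) + "\n"
```

Write the result to a file readable only by the worker process, and point rclone at it with `--config`.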

Execution relies on an EC2 Auto Scaling group driven by SQS queue depth rather than a static instance count. This configuration adjusts compute capacity dynamically, preventing resource starvation when object lists spike unexpectedly. One failure mode involves IAM roles that lack the permissions a destination bucket policy requires. Tasks are scheduled successfully, but writes fail with access-denied errors that are easy to miss without log inspection.
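AWS guidance for scaling on SQS uses a backlog-per-instance target: the acceptable latency divided by the average time to process one message. The following Python sketch shows that arithmetic. All parameters, including the default `max_size`, are assumptions for illustration.

```python
import math


def desired_capacity(
    visible_messages: int,
    avg_batch_seconds: float,
    acceptable_latency_seconds: float,
    workers_per_instance: int = 1,
    min_size: int = 0,
    max_size: int = 20,
) -> int:
    """Instances needed to clear the backlog within the latency target."""
    if avg_batch_seconds <= 0:
        raise ValueError("avg_batch_seconds must be positive")
    # Batches one instance can absorb within the latency target.
    backlog_per_instance = (acceptable_latency_seconds / avg_batch_seconds) * workers_per_instance
    desired = math.ceil(visible_messages / backlog_per_instance)
    return max(min_size, min(desired, max_size))
```

Take 1,200 visible batches at 60 s each, a 600 s latency target, and 4 concurrent rclone workers per instance. That gives a backlog of 40 batches per instance and a desired capacity of 30, which the `max_size` of 20 then caps.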

Monitoring Migration Progress via CloudWatch and SQS Metrics

CloudWatch console data from the 2.7 PB test reveals queue depths peaking at 135,000 messages before worker scaling stabilized throughput. Operators track real-time status by inspecting `/migration/lister` and `/migration/workers` log groups alongside the `ApproximateNumberOfMessagesVisible` metric. This visibility allows immediate detection of backlogs where discovery outpaces transfer capacity. Mission and Vision analysis shows that relying solely on aggregate byte counts masks transient stalls visible only in message latency distributions. The Auto Scaling group reached five instances within ten minutes, yet static alert thresholds often trigger false positives during this ramp-up phase. A fixed warning limit fails to account for the dynamic nature of elastic fleets, necessitating percentage-based anomaly detection instead.
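The percentage-based anomaly detection suggested above can be sketched in Python as a comparison against a trailing mean. The input would be periodic samples of `ApproximateNumberOfMessagesVisible`. The window length and the 50% threshold are assumptions to tune against your own ramp-up curve.

```python
from collections import deque
from typing import Iterable, Iterator


def flag_anomalies(depths: Iterable[float], window: int = 5, pct: float = 0.5) -> Iterator[int]:
    """Yield indices where queue depth deviates from the trailing mean by more than pct.

    A relative threshold grows with the fleet, unlike a fixed warning limit.
    """
    recent: deque = deque(maxlen=window)
    for i, depth in enumerate(depths):
        if len(recent) == window:
            baseline = sum(recent) / window
            if baseline > 0 and abs(depth - baseline) / baseline > pct:
                yield i
        recent.append(depth)
```

In the series `[100, 110, 120, 130, 140, 150, 400, 160]`, the steady growth passes and only the jump to 400 (index 6) is flagged.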

- View the EC2 Auto Scaling Activity tab to confirm instance launch events match queue depth spikes.

- Inspect CloudWatch Logs for specific rclone error codes indicating credential expiration or network timeouts.

- Correlate SQS visibility timeout adjustments with retry storm patterns observed in worker logs.
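Workers can turn rclone's exit status into a queue decision. rclone documents its exit codes, including 0 (success), 1 (syntax or usage error), 5 (temporary error), 7 (fatal error), and 10 (duration exceeded). The action mapped to each code below is an assumed policy, sketched in Python, not something rclone prescribes.

```python
from enum import Enum


class Action(Enum):
    DELETE = "delete"  # batch done: delete the SQS message
    RETRY = "retry"    # leave the message; it reappears after the visibility timeout
    ALERT = "alert"    # retrying won't help; surface to an operator or the DLQ


# Documented rclone exit codes; the chosen actions are an assumed policy.
EXIT_CODE_ACTIONS = {
    0: Action.DELETE,  # success
    1: Action.ALERT,   # syntax or usage error
    5: Action.RETRY,   # temporary error that more retries might fix
    7: Action.ALERT,   # fatal error that retries won't fix, e.g. account suspended
    10: Action.RETRY,  # --max-duration reached before the batch finished
}


def action_for(exit_code: int) -> Action:
    """Unknown codes fall back to RETRY; the DLQ's maxReceiveCount bounds repeats."""
    return EXIT_CODE_ACTIONS.get(exit_code, Action.RETRY)
```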

Blind scaling creates measurable waste when workers idle after queue drainage. High-frequency polling drains CPU cycles without moving data if the lister layer pauses for API rate limits. Network saturation occurs when multiple nodes simultaneously pull large objects, creating contention that metrics alone cannot resolve without deep packet inspection.

Checklist for Cleaning Up Cross-Cloud Migration Stacks

According to Mission and Vision, operators must terminate active Fargate tasks before deleting the CloudFormation stack to prevent orphaned compute charges. Deleting the infrastructure while workers are still processing batches interrupts them before their SQS messages can be deleted or redelivered. The resulting message loss requires manual reconciliation.

- Verify EC2 Auto Scaling activity indicates zero running instances or manually terminate remaining nodes.

- Confirm CloudWatch log streams for `/migration/lister` show no recent ingestion events.

- Purge any test S3 buckets created during validation to avoid persistent storage fees.

- Execute the stack deletion command only after queue depth reaches zero.
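The checklist can be encoded as a pre-deletion gate, sketched here in Python. The field names mirror the SQS metrics `ApproximateNumberOfMessagesVisible` and `ApproximateNumberOfMessagesNotVisible`. How the values are collected, and the 15-minute quiet period for the lister logs, are assumptions. Checking in-flight messages as well as visible ones matters: a queue can show zero visible messages while workers still hold batches.

```python
from dataclasses import dataclass


@dataclass
class TeardownState:
    visible_messages: int                 # ApproximateNumberOfMessagesVisible
    in_flight_messages: int               # ApproximateNumberOfMessagesNotVisible
    running_instances: int                # in-service Auto Scaling instances
    running_lister_tasks: int             # Fargate lister tasks still RUNNING
    minutes_since_last_lister_log: float  # age of the newest /migration/lister event


def teardown_blockers(s: TeardownState, quiet_minutes: float = 15) -> list:
    """Return reasons the stack should NOT be deleted yet (empty list = safe)."""
    blockers = []
    if s.visible_messages or s.in_flight_messages:
        blockers.append("queue not empty (including in-flight batches)")
    if s.running_lister_tasks:
        blockers.append("Fargate lister tasks still running")
    if s.running_instances:
        blockers.append("worker instances still running")
    if s.minutes_since_last_lister_log < quiet_minutes:
        blockers.append("/migration/lister logs still active")
    return blockers
```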

Rising energy prices, identified as a significant cost driver in 2026, increase the total cost of ownership for cloud computing and make idle-resource cleanup financially material. Teardown speed has to be balanced against making sure Secrets Manager credentials stay valid while final acknowledgments (message deletions) are still propagating. Operators who skip the zero-queue verification risk leaving partially transferred objects that lack integrity hashes. That oversight forces a complete re-enumeration of the source dataset, wasting the deterministic partitioning logic built into the worker fleet.

Measurable ROI and Strategic Advantages of Distributed Migration Patterns

Defining Strategic ROI in Distributed Migration Architectures

Strategic ROI quantifies migration success through vendor neutrality and cost predictability rather than raw transfer speed alone. The global cloud market reached $913 billion in 2025, driving organizations to prioritize architectures that prevent lock-in. Gartner predicts that cloud computing will be a required component for competitiveness by 2028, making flexible data portability a survival metric. Search Lab data indicates 75% of enterprise data will process outside traditional datacenters by 2027, necessitating distributed models over centralized bottlenecks.

The mechanism relies on decoupling discovery from execution using Amazon SQS to buffer variable network throughput against compute scaling. This design isolates failures to small batches, ensuring transient errors do not halt petabyte-scale operations. However, the operational overhead of managing auto-scaling groups exceeds that of fully managed services like DataSync. The constraint is clear: teams gain financial control but sacrifice turnkey simplicity.

Organizations must weigh the lower per-gigabyte cost against the engineering hours required to maintain the pipeline. Mission and Vision analysis suggests this trade-off favors entities moving unique, unstructured datasets where custom metadata tagging is mandatory. Standardized transfers may still justify managed service premiums despite higher unit costs.
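The trade-off between per-gigabyte cost and engineering hours reduces to a break-even volume. The following Python sketch computes it. Every input is a placeholder to replace with your own rates, not pricing from AWS or this article.

```python
import math


def breakeven_tb(engineering_hours: float, hourly_rate: float,
                 managed_cost_per_gb: float, diy_cost_per_gb: float) -> float:
    """Terabytes that must move before per-GB savings repay the engineering time."""
    saving_per_gb = managed_cost_per_gb - diy_cost_per_gb
    if saving_per_gb <= 0:
        return math.inf  # DIY never pays back on unit cost alone
    return engineering_hours * hourly_rate / saving_per_gb / 1000  # GB -> TB (decimal)
```

Take hypothetical figures of 80 engineering hours at $100/hour and a $0.01/GB unit saving. On those numbers the pipeline pays for itself after about 800 TB.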

Tableau and Fanatics: Real-World Cross-Cloud Migration Outcomes

The Tableau platform migrated 600 TB across 15 environments to AWS without customer disruption. This execution utilized Amazon EKS and S3 to improve scalability while maintaining continuous service availability. The mechanism relies on distributed worker fleets that prevent single-point failures during large-scale object enumeration. However, coordinating state across 15 distinct environments introduces complex dependency chains that delay cutover windows if not strictly sequenced. Network operators must prioritize environment isolation to prevent credential leakage between development and production buckets during the transition.

Fanatics Commerce migrated 1,800 servers with a projected 48% infrastructure cost saving, replacing legacy data center hardware with cloud-native compute. In the distributed rclone pattern, scaling workers on EC2 lets throughput follow queue depth dynamically rather than relying on fixed bandwidth pipes. The drawback is that savings of this size require precise right-sizing of instance types to avoid paying for idle capacity during low-activity periods. Mission and Vision analysis indicates that failing to automate scale-down policies erodes the economic benefits of ephemeral compute resources. Distributed patterns turn migration from a linear lift-and-shift into an opportunity for architectural refactoring.

Distributed Rclone Versus AWS DataSync: Cost and Control Trade-offs

Using rclone instead of AWS DataSync for scheduled syncs saves about $200 per month. This difference accumulates quickly across multi-region deployments where replication is continuous rather than episodic. The savings come from compute ownership: operators can terminate idle EC2 instances as soon as a batch completes. Managed services often maintain persistent agents that incur baseline charges regardless of throughput. However, teams without infrastructure-as-code maturity may not be able to absorb the overhead of managing auto-scaling policies.

| Feature | Distributed rclone | AWS DataSync |
|---|---|---|
| Storage support | 70+ cloud storage products | AWS-native focus |
| Lock-in risk | Minimal | High |
| Scaling logic | Custom, driven by SQS queue depth | Managed service limits |

Rclone supports more than 70 cloud storage products, whereas managed tools frequently constrain destination options. According to Key Data Points, AWS launched DataSync "Enhanced mode" on 29 May 2025 to address cross-cloud gaps, yet vendor-specific roadmaps still dictate feature availability. The strategic benefit is protocol agility: migrating from Google Drive or Dropbox requires only a configuration update in Secrets Manager. Vendor lock-in creates a friction cost that surfaces during exit strategies years later. Mission and Vision recommends prioritizing architectures where the transfer logic remains independent of the underlying cloud control plane. Keeping the orchestration layer portable gives operators leverage, so future cost spikes from a single provider do not force expensive re-architecting projects. The limitation is the initial complexity of deploying the worker fleet.

About

Marcus Chen, Cloud Solutions Architect and Developer Advocate at Rabata.io, brings critical expertise to the complexities of distributed rclone migrations. Having previously served as a Solutions Engineer at Wasabi Technologies and a DevOps Engineer for Kubernetes-native startups, Marcus possesses deep, practical knowledge of S3 API intricacies and large-scale data infrastructure. His daily work involves architecting resilient storage solutions for AI/ML enterprises, directly aligning with the article's focus on overcoming petabyte-scale transfer challenges. At Rabata.io, a specialized provider of high-performance, S3-compatible object storage, Marcus leverages his background to optimize cross-cloud data flows without vendor lock-in. This article reflects his hands-on experience designing self-healing pipelines that mitigate data drift and operational bottlenecks. By connecting advanced orchestration tools like Amazon ECS with efficient transfer protocols, Marcus demonstrates how organizations can achieve scalable migration while maintaining cost transparency and performance standards essential for modern data-intensive workloads.

Conclusion

At petabyte scale, the architectural bottleneck shifts from storage bandwidth to orchestration fatigue. While distributed rclone delivers massive throughput, the operational debt of managing ephemeral EC2 fleets grows linearly with dataset size, eventually eroding the $200 monthly savings if automation is not absolute. As the cloud market explodes toward a $30 trillion valuation by 2035, data gravity will intensify, making the ability to switch providers instantly more valuable than marginal compute optimizations. Teams relying on manual scaling policies or immature infrastructure-as-code practices will find their migration windows slipping as object counts breach billions, triggering hard timeout failures that managed services simply absorb.

Organizations handling over 500 TB of mixed-cloud data must standardize on portable orchestration logic within the next two quarters to avoid future lock-in penalties. Do not adopt this pattern unless you can guarantee sub-minute fleet provisioning; otherwise, the complexity tax outweighs the financial benefit. The window for cheap, DIY bulk transfer is closing as providers tighten network envelopes around serverless functions.

Start by auditing your current SQS visibility timeout settings against your largest object lists this week to ensure your workers do not silently fail during long-tail metadata operations before deploying additional nodes.