Apache Iceberg direct query cuts latency now

Stop moving petabytes of data just to query them; Amazon Quick now targets Apache Iceberg directly. The thesis is clear: inserting intermediate warehouses into modern lakehouse architectures creates unnecessary latency and cost that Direct Query modes eliminate. By integrating S3 Tables as a native source, AWS allows enterprises to treat their data lake as the single source of truth without replicating storage.

This shift addresses the core inefficiency where organizations traditionally migrate data into proprietary OLAP systems before analysis. Instead of relying solely on SPICE for acceleration, architects can now use Direct Query capabilities specifically tuned for open table formats. This eliminates the operational overhead of maintaining duplicate datasets while preserving the governance required for enterprise analytics.

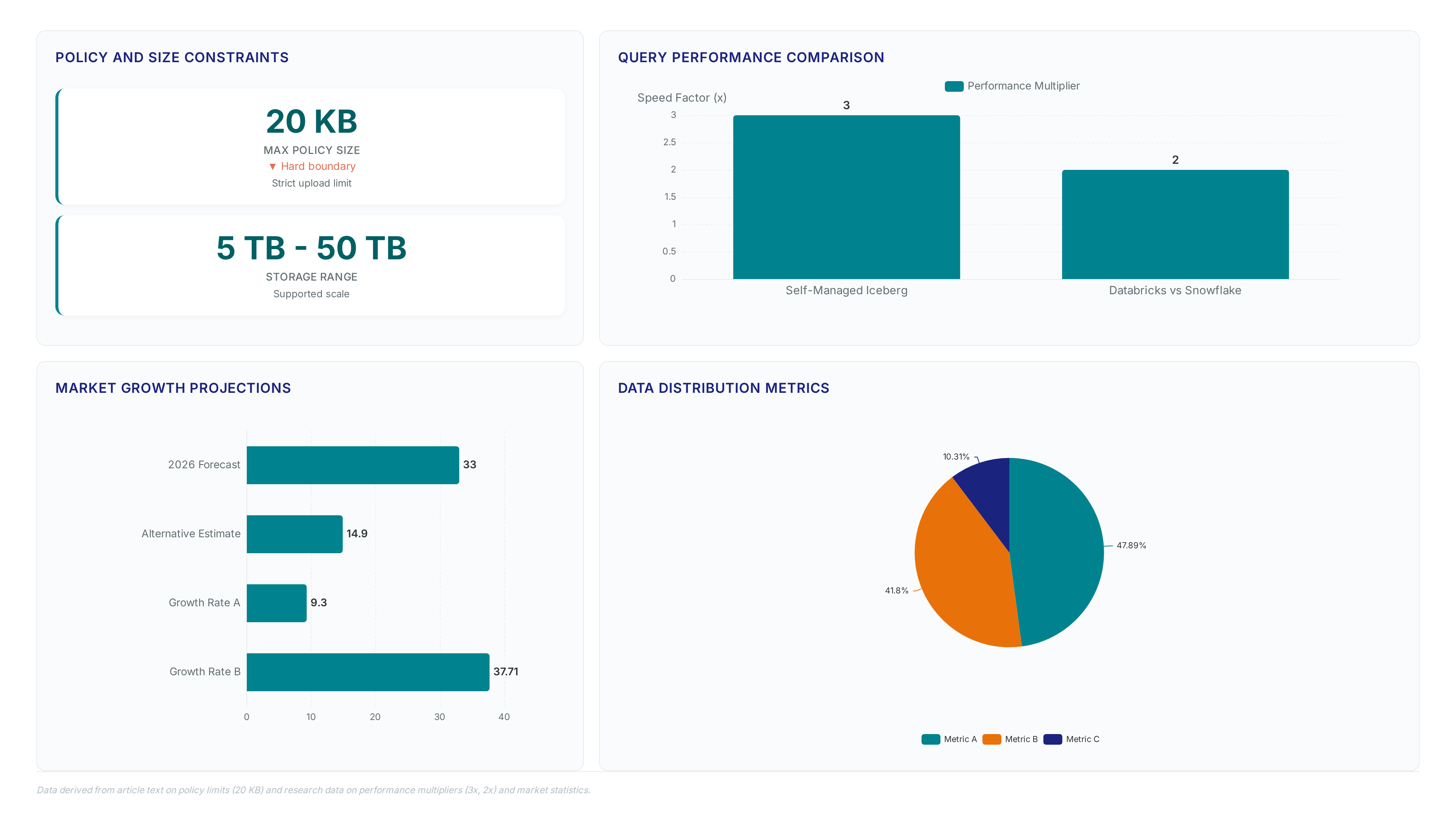

Readers will learn how this integration streamlines architecture by removing distinct warehouse layers, enables near real-time insights by minimizing pipeline dependencies, and scales performance across massive datasets without curation bottlenecks. As MarketsandMarkets predicts the cloud analytics sector will reach $41.33 billion by 2031, the pressure to optimize these underlying data paths intensifies. The era of redundant data movement for the sake of query speed is ending, replaced by direct access patterns that align with the economic realities of scaling AI-driven decision intelligence in 2026.

The Role of S3 Tables in Modern Data Lake Architecture

Amazon S3 Tables and Apache Iceberg Format Set

Amazon S3 Tables represent a distinct bucket type engineered specifically for the Apache Iceberg open table format. AWS documentation at aws.amazon.com/AmazonS3/latest/userguide/s3-tables.html confirms this design matches standard object storage durability while optimizing scalability for tabular workloads. Raw object lakes demand manual file handling, yet the table bucket abstraction manages compaction and snapshot cleanup automatically. This structural change removes the heavy operational lift usually required to maintain Apache Iceberg tables across large fleets. The service became available at AWS re:Invent in 2024, signaling a strategic move toward native lakehouse integration. The metadata layer defines the difference: standard buckets hold unstructured objects, but S3 Tables enforce schema evolution and ACID transactions natively. Teams gain direct query access without replicating data into proprietary warehouse formats. Tight coupling introduces constraints, though: migrating away from the Apache Iceberg system grows difficult once native optimizations lock specific file layouts in place. The reduced pipeline complexity comes at the price of vendor-specific implementation details embedded within the bucket structure.

Mission and Vision emphasizes that this definition fundamentally alters how enterprises treat the data lake as a single source of truth. Direct querying removes the latency inherent in ETL batches.

Streamlining Architecture with Direct Query and SPICE Modes

Direct Query and SPICE modes allow Amazon Quick to consume Apache Iceberg tables straight from an S3 table bucket. Organizations can treat the data lake as a single source of truth because intermediate data warehouses become unnecessary. The SPICE engine accelerates in-memory calculation, whereas Direct Query touches live storage without replication. This architecture eliminates the complex data movement pipelines previously required for real-time analytics. Direct file control in S3 differs sharply from proprietary storage systems that prioritize SQL performance over raw access. Operators select immediate freshness via Direct Query or aggregated speed via SPICE depending on latency tolerance. Market projections indicate the cloud analytics sector will reach $41.33 billion by 2031, driving demand for such unified structures. Agentic AI adoption in enterprise software is expected to jump from 1% to 33% by 2028. This surge necessitates architectures where AI agents query governed Iceberg tables without ETL bottlenecks. AnyCompany Corp. used this pattern to analyze streaming transaction events for fraud detection as they arrive. The shift consolidates analytics and decision intelligence into one governed experience.
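The freshness-versus-speed choice can be written down as a small rule of thumb. The sketch below is illustrative only: `Workload`, `choose_query_mode`, and the one-hour `spice_refresh_seconds` default are hypothetical names and values, not part of any Amazon Quick API.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of a dashboard workload (not a Quick API object)."""
    name: str
    max_staleness_seconds: int      # how old the data may be before it loses value
    needs_subsecond_response: bool  # whether users expect cache-like interactivity


def choose_query_mode(workload: Workload, spice_refresh_seconds: int = 3600) -> str:
    """Return "DIRECT_QUERY" or "SPICE" from the freshness-versus-speed trade-off.

    spice_refresh_seconds is an assumed refresh interval for the in-memory copy.
    """
    # A cached copy that is staler than the workload tolerates cannot be used,
    # so only Direct Query satisfies the freshness requirement.
    if workload.max_staleness_seconds < spice_refresh_seconds:
        return "DIRECT_QUERY"
    # The cache is fresh enough; prefer it when response time matters.
    if workload.needs_subsecond_response:
        return "SPICE"
    # Neither constraint binds, so avoid keeping a second copy of the data.
    return "DIRECT_QUERY"


fraud = Workload("fraud-monitoring", max_staleness_seconds=60, needs_subsecond_response=True)
weekly = Workload("weekly-revenue", max_staleness_seconds=86_400, needs_subsecond_response=True)
print(choose_query_mode(fraud), choose_query_mode(weekly))
```

A real decision would also weigh dataset size and concurrency, which this sketch leaves out.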

Mission and Vision recommends deploying Direct Query for streaming ingestion paths requiring current state visibility.

Automated Maintenance in S3 Tables vs Self-Managed Iceberg

Data from loka.com/blog/test-driving-s3-tables shows automated maintenance executes compaction, snapshot cleanup, and orphan file deletion without user intervention. This mechanism merges small files internally to optimize query performance while deleting unused data versions. Self-managed Apache Iceberg deployments, by contrast, require operators to script these tasks manually or rely on external orchestration tools. The limitation, compared to custom cron jobs, is reduced control over the exact timing of file consolidation. Network engineers gain storage efficiency but lose specific scheduling agency for maintenance windows. Operators must choose between managed convenience and manual precision when architecting lakehouse storage layers.
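To make the idea of merging small files concrete, here is a toy planner that groups small files into batches near a target size. It is a sketch of the general technique only, not the algorithm S3 Tables runs internally; `plan_compaction` and the 512 MB target are assumptions chosen for illustration.

```python
def plan_compaction(file_sizes_mb, target_mb=512):
    """Group small files into batches whose total size stays at or under target_mb.

    Files already at or above the target are left alone as single-file batches.
    """
    batches = []
    current, current_size = [], 0
    for size in sorted(file_sizes_mb):
        if size >= target_mb:
            # Already large enough; rewriting it would gain nothing.
            batches.append([size])
            continue
        if current and current_size + size > target_mb:
            # Adding this file would overshoot the target, so close the batch.
            batches.append(current)
            current, current_size = [], 0
        current.append(size)
        current_size += size
    if current:
        batches.append(current)
    return batches


# One hundred 8 MB files collapse into two output files instead of one hundred.
plan = plan_compaction([8] * 100)
print(len(plan), [sum(batch) for batch in plan])
```

A self-managed deployment would have to schedule something like this itself, which is the operational work the managed service takes over.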

The architectural tension lies between reducing operational toil and maintaining absolute control over storage lifecycle events. Most organizations prioritize the elimination of manual compaction tasks to prevent small-file degradation. Teams with strict compliance windows may find automated background processes unpredictable during peak ingestion cycles. This constraint forces a decision between accepting vendor-managed timing or retaining full procedural authority.

Mission and Vision recommends evaluating workload volatility before delegating file management to the platform layer.

Inside the Query Engine: Direct Query Mode versus SPICE

Direct Query Mode Mechanics for S3 Tables

Direct Query translates visualizations into optimized SQL against live Apache Iceberg tables without data duplication, according to AWS documentation. The engine parses user requests and pushes computation filters directly to the storage layer, reading Parquet files from the table bucket via standard REST APIs. This mechanism avoids the latency of extracting and loading data into a separate columnar store. Performance gains reach up to 3x faster query speeds compared to self-managed setups per AWS research data. Every visualization refresh triggers a fresh network request to S3, consuming bandwidth that cached engines avoid. Network architects must provision sufficient throughput between QuickSight compute nodes and S3 endpoints to prevent latency spikes during concurrent user sessions. Operators gain immediate access to the latest transaction events but sacrifice the sub-second response times of in-memory caches. Mission and Vision recommends this mode for workloads requiring strict data sovereignty where moving copies violates governance policies. Reliance shifts to underlying network path stability rather than internal engine speed.
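As a rough illustration of how a visual's filters can become a SQL predicate that the engine pushes toward storage, consider the sketch below. The SQL that Amazon Quick actually generates is not shown in this article; `build_query`, the table name, and the column names are hypothetical.

```python
ALLOWED_OPS = {"=", "<", "<=", ">", ">=", "<>"}


def build_query(table, columns, filters):
    """Render a parameterized SELECT whose WHERE clause carries the visual's filters.

    filters is a list of (column, operator, value) tuples. Values are returned
    separately as parameters rather than being spliced into the SQL text.
    """
    for _, op, _ in filters:
        if op not in ALLOWED_OPS:
            raise ValueError(f"unsupported operator: {op}")
    sql = f"SELECT {', '.join(columns)} FROM {table}"
    if filters:
        predicates = " AND ".join(f"{col} {op} ?" for col, op, _ in filters)
        sql += f" WHERE {predicates}"
    params = [value for _, _, value in filters]
    return sql, params


sql, params = build_query(
    "payments.transactions",                 # hypothetical namespace.table
    ["region", "amount"],
    [("event_date", ">=", "2026-01-01"), ("region", "=", "EU")],
)
print(sql)
print(params)
```

Because the predicates travel with the query, an Iceberg-aware engine can use table metadata to skip files that cannot match, instead of reading everything and filtering afterwards.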

Real-Time Fraud Detection with Kinesis and Iceberg

Dashboard interactions translate into immediate SQL against Apache Iceberg tables, rendering live transaction streams visible through Direct Query mode. AnyCompany Corp. ingests payment events via Amazon Kinesis Data Streams to populate an S3 table bucket. This architecture bypasses batch ETL delays, allowing analysts to visualize regional fraud spikes within minutes of occurrence. Automated compaction merges small Parquet files to increase read throughput by up to 3x compared to unoptimized lakes, based on AWS research data. The mechanism relies on pushing computation filters directly to storage rather than copying data into a proprietary engine. Visualization refreshes consume network bandwidth between the analytics service and the S3 endpoint, creating a dependency on internal throughput capacity. Operators must provision sufficient connectivity to sustain concurrent user sessions without latency degradation. Absolute data currency conflicts with query response time under heavy load. Direct access guarantees the latest fraud signal but exposes the pipeline to variable network conditions. Teams requiring sub-second interactivity for high-volume dashboards may still prefer aggregated caches for specific views. Mission and Vision recommends reserving Direct Query for investigative workflows where freshness outweighs raw speed requirements.

Performance Gains: S3 Tables vs Self-Managed Iceberg

Query performance improves by up to 3x with S3 Tables over self-managed Apache Iceberg implementations, per AWS. Automated compaction merges small files into larger, query-optimized files without manual intervention, driving this acceleration. The mechanism pushes computation filters directly to the storage layer, notably reducing total I/O requests. The realized gain still depends on the network bandwidth between compute nodes and the table bucket. Operators ignoring this dependency face latency spikes despite storage-side optimizations. The architecture also supports up to 10x higher transactions per second by eliminating metadata bottlenecks. Self-managed clusters often stall during concurrent writes due to uncoordinated snapshot management. Direct Query mode exposes these live transactional improvements immediately to Amazon Quick dashboards. The trade-off is reduced control over exact compaction timing compared to custom cron jobs. Network engineers must prioritize consistent low-latency connectivity over raw storage speed to realize these gains. Failure to provision adequate bandwidth negates the storage-layer efficiency improvements entirely.
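The bandwidth dependency can be illustrated with a deliberately simple model in which a refresh costs storage scan time plus network transfer time. The additive model and every number below are assumptions for illustration, not measurements; only the 3x storage-side factor comes from the text.

```python
def end_to_end_seconds(scan_seconds, storage_speedup, scanned_gb, bandwidth_gbps):
    """Toy model: scan time (divided by the storage speedup) plus transfer time."""
    transfer_seconds = scanned_gb * 8 / bandwidth_gbps  # gigabytes -> gigabits
    return scan_seconds / storage_speedup + transfer_seconds


def effective_speedup(scan_seconds, storage_speedup, scanned_gb, bandwidth_gbps):
    """End-to-end speedup a user sees once the network share is included."""
    baseline = end_to_end_seconds(scan_seconds, 1, scanned_gb, bandwidth_gbps)
    optimized = end_to_end_seconds(scan_seconds, storage_speedup, scanned_gb, bandwidth_gbps)
    return baseline / optimized


# Same 3x storage-side gain, two hypothetical network paths.
for gbps in (1, 25):
    print(gbps, "Gbps ->", round(effective_speedup(30, 3, 10, gbps), 2), "x end to end")
```

Under these assumed numbers, the slow link leaves only a small fraction of the storage-side improvement visible to the user, while the fast link preserves most of it.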

Strategic Advantages of S3 Tables for Enterprise Analytics

Defining S3 Tables Strategic Value for Enterprise Analytics

Market analysis reveals S3 Tables apply open Apache Iceberg formats on customer-owned storage with automated maintenance. This architectural shift distinguishes the service from standard object storage by embedding table semantics directly into the table bucket. Operators gain a governed, AI-ready asset without migrating data into proprietary warehouses controlled by Snowflake or Databricks. The mechanism merges small files and cleans orphaned snapshots internally, removing the operational burden of manual compaction scripts. On consumption-based competitor platforms, poorly written queries often consume notably more credits than optimized versions. Cost predictability therefore relies heavily on query optimization, because uncontrolled scans incur variable charges, unlike fixed-capacity clusters. Network architects must implement strict governance policies to prevent budget overruns from ad-hoc analytical workloads. A choice emerges between flexible scaling and predictable monthly billing cycles.

| Capability | Standard S3 | S3 Tables |
|---|---|---|
| Format | Objects | Apache Iceberg |
| Maintenance | Manual | Automated |
| Query Target | External Engines | Native Direct Query |

Deployment fits scenarios where real-time insight outweighs the need for rigid cost caps.

Applying S3 Tables for Cost-Efficient Mid-Size Team Analytics

A midsize team might spend an estimated $36,000 annually on Snowflake compared to $28,000 on Databricks. S3 Tables alter this fixed-cost model by operating on a variable pay-per-GB and request basis with storage starting at approximately $0.023 per GB. The mechanism charges strictly for scanned data and metadata operations rather than reserved compute capacity, aligning expenses directly with query volume. This structure benefits teams with sporadic analytics needs but creates budget uncertainty for continuous, high-volume workloads. Network architects weigh the risk of unpredictable monthly bills against the potential savings from eliminating idle warehouse costs.
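The arithmetic behind this comparison can be sketched as follows. Only the $0.023 per GB storage figure and the two annual estimates come from the text; the stored volume and the monthly request-and-query spend are hypothetical inputs that a team would replace with its own billing data.

```python
STORAGE_USD_PER_GB_MONTH = 0.023  # "starting at approximately" figure from the text
FIXED_ANNUAL_USD = {"Snowflake": 36_000, "Databricks": 28_000}  # estimates from the text


def annual_storage_cost(stored_gb, usd_per_gb_month=STORAGE_USD_PER_GB_MONTH):
    """Storage-only cost for one year at a flat per-GB monthly rate."""
    return stored_gb * usd_per_gb_month * 12


def annual_variable_cost(stored_gb, monthly_request_and_query_usd):
    """Storage plus an assumed monthly spend on requests and scanned data."""
    return annual_storage_cost(stored_gb) + 12 * monthly_request_and_query_usd


def headroom_vs_fixed(fixed_annual_usd, stored_gb, monthly_request_and_query_usd):
    """Positive means the variable model is cheaper; negative means it costs more."""
    return fixed_annual_usd - annual_variable_cost(stored_gb, monthly_request_and_query_usd)


# Hypothetical team: 10,000 GB stored, $500 per month on requests and scans.
for vendor, fixed in FIXED_ANNUAL_USD.items():
    print(vendor, round(headroom_vs_fixed(fixed, 10_000, 500), 2))
```

The headroom shrinks as the monthly query spend grows, which is the budget uncertainty that continuous, high-volume workloads introduce.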

EOS Group migrated its data to a centralized data lake on Amazon S3 using AWS Database Migration Service (DMS) to reduce infrastructure costs by 50%. Operators should select Direct Query mode when freshness outweighs latency tolerance, while reserving SPICE for repetitive dashboarding requiring sub-second response times. The choice dictates whether the network bears the burden of real-time data transfer or absorbs the memory cost of in-memory caching.

Initial exploration phases with wildly fluctuating data volumes suit Direct Query deployment. Fixed competitor pricing penalizes growth linearly, whereas variable models scale gracefully until throughput thresholds trigger re-evaluation.

Comparing S3 Tables Performance Against Snowflake and Databricks

S3 Tables apply open Apache Iceberg formats unlike proprietary storage in Snowflake or Databricks. This architectural distinction allows direct file control within the customer's S3 bucket while competitors prioritize managed SQL layers over raw access. Snowflake obscures underlying files to optimize internal compression, whereas Databricks locks ACID transactions behind its Delta Lake format. Operators manage their own governance policies on the open files rather than relying on vendor-enforced boundaries. Direct access enables cost models based on actual storage usage rather than opaque compute credits.

| Feature | S3 Tables | Snowflake | Databricks |
|---|---|---|---|
| Storage Format | Apache Iceberg | Proprietary Columnar | Delta Lake |
| File Control | Direct User Access | Vendor Managed | Direct via Delta |
| Primary Lock-in | None (Open) | High | Medium |

Query patterns require evaluation before migrating legacy workloads to open formats. Fixed-credit platforms often penalize unoptimized joins with exponential cost increases regardless of storage efficiency. Open formats demand rigorous file sizing discipline to prevent small-file degradation during high-frequency writes. Teams lacking strict data engineering governance may find the freedom of open tables leads to performance decay without automatic tuning.

Implementing S3 Table Connections in Five Steps

Defining S3 Table Bucket Access Policies and Size Limits

Policy validation occurs instantly during upload, rejecting any access policies exceeding a strict 20 KB maximum size limit. This hard boundary forces network architects to draft concise JSON structures, as verbose definitions frequently trigger rejection in complex multi-tenant environments. Attempting to mirror granular on-premise ACLs often leads to deployment failures because bloated condition statements push the byte count past the set threshold. Operators must group principals effectively rather than listing individual ARNs to stay within bounds.

- Navigate to the Permissions tab within the Manage account interface.

- Draft the policy ensuring the total character count remains well below the 20 KB ceiling.

- Apply the policy to the specific table bucket using the AWS CLI or console.

Silent connection failures plague Amazon Quick when teams overlook this specific size cap while resolving data sources. The table bucket architecture prioritizes low-latency parsing over policy complexity, differing notably from standard storage buckets that allow larger metadata attachments. Such design choices create friction between fine-grained security controls and successful service integration. Auditing existing IAM roles for bloat before migration prevents unexpected connectivity outages.
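A pre-flight size check can catch an oversized policy before it is applied. The sketch below assumes the 20 KB limit means 20 × 1024 bytes of UTF-8 JSON; the account ID, role name, bucket ARN, and action list are placeholders for illustration, not values from a real deployment.

```python
import json

MAX_POLICY_BYTES = 20 * 1024  # the 20 KB limit from the text, assumed to be 20 x 1024 bytes


def policy_size_bytes(policy: dict) -> int:
    """Size of the policy as compact UTF-8 JSON (optional whitespace removed)."""
    return len(json.dumps(policy, separators=(",", ":")).encode("utf-8"))


def fits_limit(policy: dict) -> bool:
    return policy_size_bytes(policy) <= MAX_POLICY_BYTES


def make_policy(principals):
    """Build an illustrative read-only statement; all identifiers are placeholders."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"AWS": principals},
            "Action": ["s3tables:GetTable", "s3tables:GetTableData"],
            "Resource": "arn:aws:s3tables:us-east-1:111122223333:bucket/s3table-datasamples/*",
        }],
    }


# Grouping principals under one role keeps the document small ...
grouped = make_policy(["arn:aws:iam::111122223333:role/analytics-readers"])
# ... while listing a thousand individual users overshoots the limit.
bloated = make_policy([f"arn:aws:iam::111122223333:user/analyst-{i:04d}" for i in range(1000)])

print(policy_size_bytes(grouped), fits_limit(grouped))
print(policy_size_bytes(bloated), fits_limit(bloated))
```

Running a check like this in CI, before the policy is applied, surfaces the problem as a clear failure rather than as a broken data source later.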

Configuring Amazon QuickSight Permissions for S3 Table Discovery

Selecting Manage account initiates the workflow required for Amazon Quick to begin automatic S3 Tables discovery. This single action exposes Iceberg-based assets without requiring manual path entry or guesswork regarding ARN structures. Skipping this step renders the data source invisible during creation, frustrating operators who cannot locate their tables. The mechanism relies on explicit allow-listing to maintain least-privilege security postures rather than depending on broad bucket policies. Enabling auto-discovery across hundreds of buckets increases the attack surface should an account compromise occur. Limiting selection to specific analytical domains protects organizational roots from unnecessary exposure.

- Navigate to Permissions and AWS Resources within the account settings menu.

- Select Amazon S3 Tables under the section allowing access and auto discovery.

- Click Select S3 table buckets to open the resource picker interface.

- Choose the target bucket, such as s3table-datasamples, then click Finish.

- Verify the Amazon S3 bucket option remains selected before choosing Save.

Mission and Vision recommends isolating these permissions to dedicated service roles instead of relying on root credentials. A cleaner audit trail emerges when every discovery event maps to a specific service identity. Failure to restrict scope often leads to accidental exposure of sensitive financial tables to general BI users. Precision in selection prevents the "noise" of irrelevant datasets from cluttering the semantic layer.

Validating Connection Requirements and Cleanup Procedures

A streaming pipeline containing data in an Amazon S3 table bucket acts as a mandatory prerequisite before connection attempts commence. This requirement ensures the Apache Iceberg format exists at the storage layer for immediate query resolution. Amazon QuickSight cannot establish the necessary metadata handshake without this foundation, resulting in immediate timeout errors during source creation. Operators often assume empty buckets suffice for configuration tests, overlooking the dependency on active ingestion flows. Validating Kinesis or Firehose output first prevents wasted troubleshooting cycles on permission sets.

- Verify that the required Amazon QuickSight Enterprise subscription is active.

- Confirm data presence in the target table bucket via CLI or console.

- Execute resource deletion scripts post-analysis to stop billing accumulation.

Idle capacity keeps running indefinitely if users fail to unsubscribe from the Amazon QuickSight account. Users who no longer need the tools must delete all related resources and unsubscribe. This cleanup step prevents surprise invoices generated by lingering compute containers or scheduled refresh engines. Neglecting this phase converts a temporary analytical task into a permanent fixed cost burden. Operational discipline dictates treating analytics sessions as ephemeral constructs rather than persistent services.

About

Alex Kumar, Senior Platform Engineer and Infrastructure Architect at Rabata.io, brings deep technical expertise to the discussion on Amazon S3 Tables and AI-ready analytics. With a specialized background in Kubernetes storage architecture and cost optimization for cloud-native applications, Alex understands the critical infrastructure challenges organizations face when scaling data lakes. His daily work involves designing reliable, high-performance storage solutions that directly parallel the efficiency gains offered by modern table formats like Apache Iceberg. At Rabata.io, a provider of fast, S3-compatible object storage, Alex helps enterprises eliminate vendor lock-in while maximizing throughput for AI and ML workloads. This practical experience allows him to articulate how transitioning to optimized table structures can significantly reduce latency and costs. By connecting theoretical architectural benefits with real-world implementation strategies, Alex provides valuable insights for teams aiming to bridge the gap between raw data storage and actionable, agentic AI intelligence.

Conclusion

The shift to decoupled storage-compute architectures reveals that governance complexity scales faster than data volume, not slower. While unit economics favor direct object storage, the operational tax of managing Iceberg metadata across distributed teams creates a hidden bottleneck that pure cost calculators ignore. As the market expands toward real-time demands, organizations relying on manual cleanup scripts and ephemeral session logic will face audit failures when scaling from pilot projects to enterprise-wide deployment. The true breakage point isn't bandwidth; it's the inability to enforce consistent identity mapping without centralized policy engines.

Adopt this architecture immediately if your team manages over 50TB of semi-structured data and requires sub-minute freshness, but defer if your primary constraint is legacy ETL dependency rather than storage cost. Do not migrate batch-only workloads expecting linear savings; the ROI only materializes when query concurrency exceeds traditional warehouse thresholds. You must treat metadata management as a first-class citizen, not an afterthought of storage configuration.

Start by auditing your current QuickSight subscription status and idle compute containers this week to eliminate immediate billing leakage before layering new ingestion pipelines. This single action isolates waste from genuine architectural needs, providing a clean baseline for migration.